Model: An article recently appeared in the journal ‘Nature Physics’ about the lure of models. It is worth noting the following from the article:

“models should come with safety warnings. Most people realize that model predictions can be wrong, and often are wrong. Yet misplaced confidence can draw individuals, groups or entire governments into making painfully poor decisions. Worse, as Erica Thompson of the London School of Economics examines in her incisive new book Escape from Model Land, models have an almost magical capacity to lure their users into mistaking the sharp, tidy and analytically accessible world of a model with actual reality, with unfortunate consequences.”

https://www.nature.com/articles/s41567-022-01906-3

This is a perfect description of the modelling used to predict the risk to wild salmon from sea lice and is highly relevant to the following commentary.

Finally: The long-awaited SPILLS report was finally published last week. The project team do not appear to offer an overall conclusion but the report’s key findings are that the lice dispersal models worked reasonably well. However, what is absolutely clear is that none of the observational data generated during the project supports this conclusion.

The project included the collection and analysis of the following data sources to validate the sea lice dispersal models.

- Counts of lice infesting salmon held in moored sentinel cages, which are designed to measure infective pressure of lice on fish.

The report concluded that the models produced a reasonable match to sentinel cage data when lice were relatively abundant. That was the case only in the autumn of 2011, which means that they did not produce a reasonable match in the spring of 2011, 2012 and 2013 or in the autumn of 2012 and 2013: five of the six seasons sampled, or 83% of the time.

- Lice captured in plankton tows, which are designed to measure sea lice in the water column.

Sea lice were detected in just 4.8% of 372 samples. However, as expected, the report states that ‘Failure to capture sea lice larvae does not equate to an absence in the water column’.

- Lice counts on wild fish.

Neither the summary report, nor the work package reports state that over five separate samplings during 2021, just 13 fish were caught. The number of fish caught per sampling ranged from 2 to 4. The SFFCC protocol on fish sampling sets a minimum of 30 fish per sample, so these numbers fall well short.
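The three headline figures above are simple enough to check by hand, but a minimal sketch makes the arithmetic explicit. All inputs are the numbers quoted in this commentary (the 18 positive plankton samples is the count the project itself reported); the variable names are purely illustrative and nothing here is read from the SPILLS data files.

```python
# Figures quoted in this commentary; variable names are illustrative only.

# Sentinel cages: six seasons sampled (spring and autumn, 2011 to 2013),
# with a "reasonable match" reported for autumn 2011 only.
seasons_total = 6
seasons_matched = 1
share_unmatched = (seasons_total - seasons_matched) / seasons_total

# Plankton tows: 18 of 372 samples contained larval sea lice.
tows_total = 372
tows_with_lice = 18
share_tows_with_lice = tows_with_lice / tows_total

# Wild fish: 13 fish over five samplings, against a protocol minimum of 30 per sample.
fish_caught = 13
samplings = 5
protocol_minimum = 30
mean_fish_per_sampling = fish_caught / samplings

print(f"Seasons without a reasonable match: {share_unmatched:.0%}")
print(f"Plankton samples containing lice: {share_tows_with_lice:.1%}")
print(f"Mean fish per sampling: {mean_fish_per_sampling:.1f} "
      f"(protocol minimum {protocol_minimum})")
```

On these inputs, the whole of the 2021 wild fish catch combined is still less than half of the minimum set for a single sample.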

The final report’s foreword, written by the steering group, states that this is a first attempt at a detailed configuration, calibration and validation exercise to compare the three models used in the project. Unfortunately, the report does not appear to reach any conclusion as to whether the project was successful in its aims or not. I suggest it was not. In fact, it largely failed to validate any of the models with real data. The only firm conclusion that can be drawn is that the models bear no resemblance to reality.

In the foreword, the steering group write that the models should predict levels and distribution of sea lice in the environment as they spread from open-pen aquaculture facilities. Using a term favoured by MSS, the body of scientific evidence has yet to demonstrate that sea lice from salmon farms actually spread throughout the environment at all, let alone in line with the various particle studies incorporated into these models. Perhaps the real reason that so few larval lice can be found in the water column is simply that they are not there.

The steering group go on to say that the ‘model evaluation in the project was hampered by uncertainties.’ Of course, as already stated, the biggest uncertainty is whether sea lice behave in the way that the model predicts. They add that had better data been available, it is likely that the validation of the models would have been improved. However, had better data been available, it could equally have shown that the assumptions that the models make are simply wrong.

The problem is that none of the steering group is a parasitologist and in my opinion some of them are blinkered by the long-established narrative that sea lice from salmon farms are damaging to wild fish. Yet, the only ‘evidence’ to date amounts to a couple of papers cited by Marine Scotland Science in their Summary of Science and these don’t actually prove that salmon farms are having a deleterious effect on wild fish. Unfortunately, MSS have so far refused to discuss why these papers do not show what they claim.

The SPILLS project team consists of 21 personnel together with two collaborators named on one of the reports, plus four members of the steering group. As far as I can ascertain, not one of these people is a parasitologist. This would seem a major deficiency given this is a project about a parasite. How can any conclusions be drawn about this parasite and its impacts without an extensive knowledge of all aspects of the parasite, from biology to ecology? I have previously explored this deficiency by submitting an FOI request for the name of the project parasitologist. The reply was that the provision of this information would contravene data protection regulations because it is the personal data of a third party! As the reply did not state that the project did not include the services of a parasitologist, I can only assume that there must be one, but their identity remains a mystery. Alternatively, no-one now wants to admit that including a parasitologist in the team of a project about parasites wasn’t considered sufficiently important. It is also worth mentioning that the other major project partner was SAMS, and it is unlikely that they have a parasitologist in their team because when they were asked to produce a report on the impacts of salmon farming by a Scottish Parliamentary committee, the section on sea lice was written by a fish geneticist.

Leaving aside the question of a parasitologist, the summary report states that the project includes Fisheries Management Scotland, yet none of the 21 people listed in the project represents that organisation. The only named representative of FMS is Dr Alan Wells and he is listed as a member of the steering group. It is unclear why he is part of this group except for his determination to shackle the salmon farming industry. If a highly qualified scientist from the salmon farming industry appeared on a steering group of a wild salmon project, there would be a massive outcry, but it seems that FMS must be represented on any and every committee or group about salmon farming. It’s a pity that FMS don’t seem to be more concerned about putting their own house in order, particularly about the accuracy of the catch data, before worrying about issues relating to the salmon farming industry.

Also on the steering group is Dr John Armstrong from MSS. He is co-author of a 2009 paper cited as observational data in the Scottish Government’s summary of sea lice science. This states:

‘Catches of wild salmon after the late 1980s decline on the Scottish Atlantic coast relative to elsewhere (Vøllestad et al. 2009). The area covers the majority of mainland salmon farms although the authors stressed that this did not prove a causative link with aquaculture.’

These three lines sum up the whole of the evidence used against the salmon farming industry. It is therefore not surprising that MSS don’t want to talk about the science. There is not a shred of evidence linking wild fish declines to salmon farming, except in the minds of the salmon angling fraternity. Even this SPILLS project has failed to provide any evidence so doubtless there will be a reluctance to discuss the findings of this project too.

The third member of the steering group is Peter Pollard from SEPA whose comments I won’t repeat here except to say that he must have seen some new evidence since 2020 to change his earlier view but he has not shared this with the wider audience. This brings me back to the evidence collected for the SPILLS project.

Firstly, the question of larval sea lice in the water column. The project reported that 18 of 372 samples were found to contain larval sea lice. The report begins by suggesting that ‘care must be taken to understand the implications of these low capture rates and low rates of identification with the key point of absence or capture or identification not equating to absence in the water at the time of sampling’.

How long is this narrative to persist? Why can we not start a discussion about the possibility that larval sea lice are not dispersed as the model suggests and that the larvae that are found are there accidentally? Surely any model that is forced on the industry must be validated with real data. Surely it cannot be acceptable to appear to validate a model based on the perception that the larvae must be there even if they cannot be found.

The report also discusses the difficulty in identification of larval sea lice collected by plankton tows. Despite extensive training and consultation with a taxonomy expert, the project was not able to identify the larvae with confidence. There are two contributors named on this report who appear to be master’s students and I wonder whether they were the ones tasked with larval identification. Given that Marine Scotland operate a long-term zooplankton monitoring programme as part of the Scottish Coastal Observatory, it is unclear why this expertise was not included in the project.

The second set of data comes from the 2011 to 2013 sentinel cage data. Unlike MSS, I do not believe that sentinel cages actually reflect the infective pressure of sea lice from farms because I believe that the movement of wild fish has a greater role to play than is considered. However, the reliance on ten-year-old data is not reflective of advances made by the salmon farming sector over the intervening period and if sentinel cages are the best way to assess infective pressure, then a new set of data should have been produced.

The sentinel cage data hardly gets a mention in the main report or any of the additional work package reports. Work package report 4 refers to the data source on the Scottish Government website but makes little other reference except to compare the data with particle tracking outputs. However, comparisons are only for 2011 and 2013. There is no mention of what happened to 2012. Whilst there are several comparisons of the data with the model, there is no analysis of the actual sentinel cage data. I am led to believe that this is because the data is so old that it has already been discussed in published papers.

The project report’s key findings state that, overall, the sea lice dispersal models produced a reasonable match to the most suitable infection pressure (sentinel cage) data. This was when lice were most abundant, which was autumn 2011.

Seeking more detail about the sentinel cage data means referring to the Scottish Government website for ‘Loch Linnhe biological sampling data product 2011-2013’. The site refers to two published papers that make direct use of the data, but full access to both costs $118, although 48-hour access is available (no downloading or printing) for $30.

The first – Salama et al. (2013) – refers only to data from spring 2011. This shows an average of 15 lice per cage of 50 fish with a mean abundance of 0.33 settled lice per fish. The main conclusion was that there was variation in lice counts between the sentinel cages but that, overall, there were low numbers of settled lice in May 2011.

The second paper cited, also by Salama et al. but in 2018, did not appear to analyse the data and simply used the mean abundance from the six seasons’ data to evaluate their model.

As the actual numbers do not appear in the paper nor the project results, I have recalculated the abundances for the six seasons as in the following table.

It is worth mentioning that there is variation in the sample size measured, even though each cage was stocked with the same number of fish.

| Season and year | Mean abundance | Sample size |
| --- | --- | --- |
| Spring 2011 | 0.28 | 457 |
| Autumn 2011 | 6.19 | 612 |
| Spring 2012 | 0.01 | 579 |
| Autumn 2012 | 0.01 | 890 |
| Spring 2013 | 0.35 | 586 |
| Autumn 2013 | 2.27 | 764 |

Only data from the autumn of 2011 provided sufficient lice to fit the model. Whilst the autumn 2013 numbers were somewhat elevated, the number was clearly too low for the model. However, the main point about the dispersal models is that they are intended to be used during the smolt migration in the spring and not autumn. The model does not work in the spring and thus has no relevance.

Unlike the project, I have also calculated the mean abundance for each cage for both spring and autumn seasons. This confirms the finding of Salama et al. (2013) that there was a great deal of variation between cages.
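The seasonal contrast in the table can be reduced to a pair of numbers with a minimal sketch. It assumes that ‘Sample size’ is the number of fish examined in each season, and it pools the years by weighting each mean abundance by that sample size. This pooling is an illustration added here, not a calculation taken from the project or from the Salama et al. papers, and the function name is hypothetical.

```python
# (season, year, mean abundance, sample size) copied from the table above.
# Assumption: "sample size" is the number of fish examined.
rows = [
    ("Spring", 2011, 0.28, 457),
    ("Autumn", 2011, 6.19, 612),
    ("Spring", 2012, 0.01, 579),
    ("Autumn", 2012, 0.01, 890),
    ("Spring", 2013, 0.35, 586),
    ("Autumn", 2013, 2.27, 764),
]


def pooled_mean(season):
    """Sample-size-weighted mean abundance across all years for one season."""
    selected = [(mean, n) for s, _, mean, n in rows if s == season]
    total_fish = sum(n for _, n in selected)
    return sum(mean * n for mean, n in selected) / total_fish


spring = pooled_mean("Spring")
autumn = pooled_mean("Autumn")

# The single season with the highest mean abundance.
peak = max(rows, key=lambda row: row[2])

print(f"Pooled spring mean abundance: {spring:.2f} lice per fish")
print(f"Pooled autumn mean abundance: {autumn:.2f} lice per fish")
print(f"Peak season: {peak[0]} {peak[1]} ({peak[2]} lice per fish)")
```

Under these assumptions the pooled spring figure is roughly 0.2 lice per fish, against roughly 2.4 in autumn, with autumn 2011 as the single peak season.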

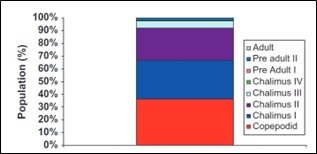

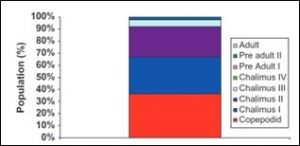

One final point on the subject of sentinel cages: the Salama et al. (2013) paper includes the following graph, which shows the percentage of lice stages found in spring 2011 on sentinel caged fish.

According to MSS, a seven-day deployment of the sentinel cage is used so that larval lice cannot develop sufficiently, which ensures that all lice are from outside the cages. Clearly, pre-adults and adults were identified, and these do not fit into this narrative.

Finally, I come to the wild fish data. This features on just one and a third pages of the work package report (Final report on sampling and analyses of sea lice larvae in the Shuna Sound region (I), and sampling of sea lice on wild fish in the Shuna Sound region (II)). Page 32, line 25 of the report states:

‘However, the sample of trout collected in 2021 was too small to provide useful information to compare with outcomes of the lice dispersal models tested in the SPILLS project.’

Yet the key points on page 4, line 4 of the same report state:

‘Although the low number of wild fish samples analysed in 2021 made firm conclusions difficult to draw, the limited data suggested that there was a lice-related risk to sea trout in the Sound of Shuna Management Area.’

Nowhere in the report is there any claim that the lice counts on the 13 wild fish sampled suggest a lice-related risk to sea trout.

In fact, the claim was taken from the Argyll Fisheries Trust report ‘Sea Lice Burdens of sea trout at Sound of Shuna Argyll 2021’. The main findings, line 12 of page 2, state:

‘The low numbers of samples analysed in 2021 make firm conclusions difficult to be drawn, but the limited data suggest that there was a lice-related risk to sea trout in the Sound of Shuna Management Area during the second year of farm production.’

This assumption is based on the misplaced use of Taranger’s estimation of mortality. I have previously had a long conversation with Dr Taranger and even he acknowledged that his original findings require a great deal more development. Taranger recommended a minimum sample size of 100 fish, a fact conveniently ignored by the various fisheries trusts who use his model. The truth is that a sample of 13 fish is absolutely worthless in terms of meaningful results.

However, what the above observation shows is that the findings in this report cannot be taken at face value and need to be fully examined and assessed.

Finally, for a project that is effectively looking at the impact of sea lice from salmon farms on wild fish, there is one set of data that has been totally ignored and that is the historical status of wild fish populations in the vicinity of the trial site. Until recently, the only publicly available data was the catch data from local fishery districts, as river data was always considered an invasion of proprietors’ privacy. However, since the advent of conservation gradings, MSS have been publishing relevant river data. This set a precedent that means requests can be made for historic data from any river in Scotland. I have submitted a request for the catch data for four rivers around Shuna Sound but MSS have advised me that they require 40 working days to respond to my request. This is because MSS have never transferred the historic paper data to a digital format. If they have to do this now, then clearly they hadn’t already done so for this project. This is a major deficiency and one I will address when the data becomes available.

If the Scottish Government were hoping that this project would validate the sea lice dispersal model and hence the sea lice risk framework, then they will be disappointed because it fails to do so at every level. The summary report mentions dissemination of data and highlights that some of the findings have been presented at scientific meetings. I very much hope that MSS will be organising a one-day event to present the report’s findings in full and give salmon farmers an opportunity to understand how an unvalidated model will be used to regulate the future growth of the industry.